
A legal director asks his AI assistant: “What is the non-competition clause in our contract with supplier X?” The AI responds confidently. Clause cited, article specified, deadline mentioned. Perfect. Except the clause doesn't exist. The AI has just invented information that is plausible... and totally false.

That is an AI hallucination.

And in business, hallucinations are unforgiving: wrong decisions, non-compliant contracts, failed audits. 96% of French organizations believe their data is not ready for AI (2026 DSI Data & IA Barometer). Yet 21% are deploying anyway. The result: failed projects, wasted budgets, lost trust.

The good news? AI hallucinations are not inevitable. They happen when artificial intelligence lacks structured, reliable, and usable data. Structure your data, and you drastically reduce errors. This article shows you how.

The main thing to remember

- Problem: An AI hallucination is a false but credible response generated by a language model. In business, it compromises compliance and decision-making.

- Cause: 75% of organizations have not secured their document foundations (DSI Data & IA Barometer 2026): unstructured data, missing metadata, poorly fed RAG systems.

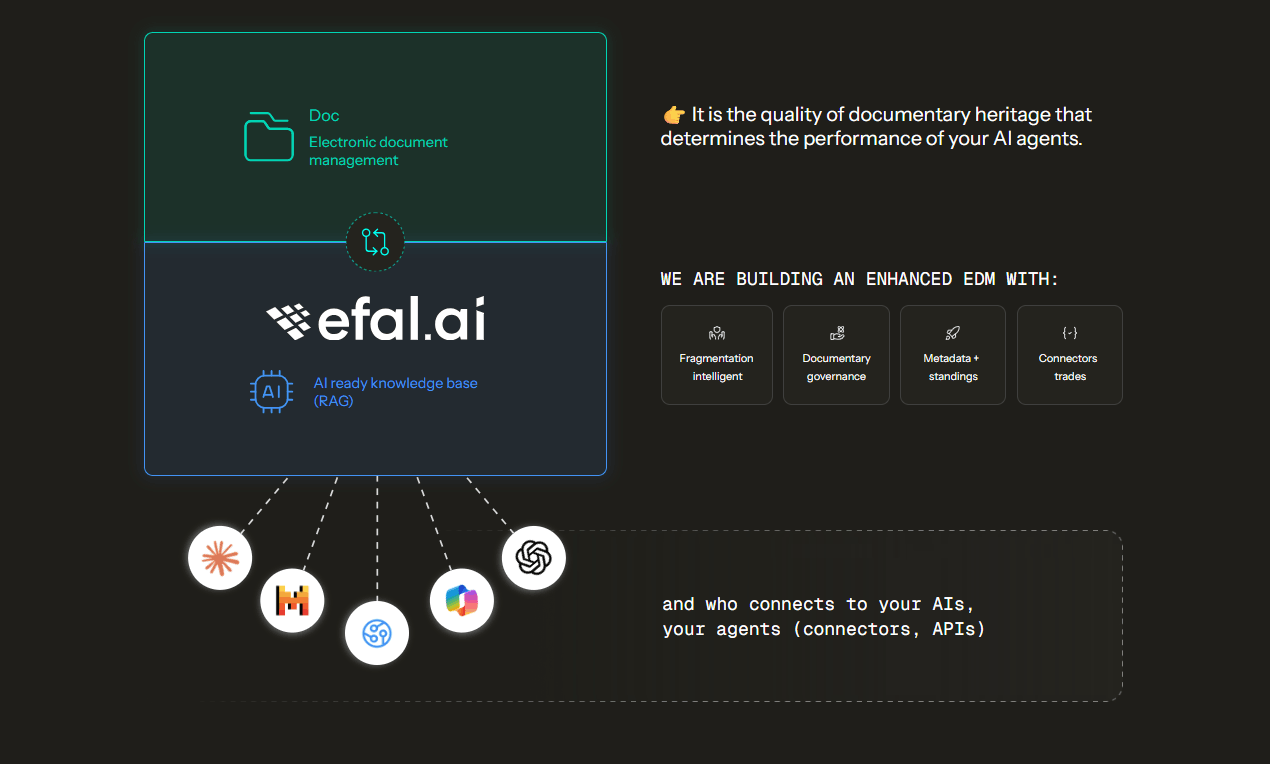

- Solution: An AI-ready EDM (structured data + rich metadata + governance) raises response accuracy by about 30 percentage points and reduces hallucinations.

- Efalia method: Intelligent fragmentation, vector-agnostic base, centralized governance, native business connectors. Result: +30% relevance, +35% quality on complex corpora.

What is an AI hallucination and how does it happen?

AI hallucination definition:

An AI hallucination is when a large language model (LLM) generates false but perfectly plausible information. The AI responds confidently. It cites sources, gives figures, structures its answer. But none of it is true.

Why? Because an LLM doesn't “know” anything. It predicts. It calculates statistical probabilities based on its training data. If reliable data is missing, it invents. Not out of malice. By design.

The mechanism is simple: you ask a question. The AI draws on what it learned during training (or on your document base, if you use a Retrieval-Augmented Generation, or RAG, system). If it can't find anything relevant, or if the documents are poorly structured, it still generates a response. Statistically probable. Factually false.
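The difference between "still generates a response" and "declines to answer" fits in a few lines. This is a toy illustration, not how any particular product works: it assumes a bag-of-words cosine similarity (real RAG systems use embedding models) and a hypothetical `min_score` threshold below which the system abstains instead of letting the model improvise.

```python
import math
import re
from collections import Counter

def tokenize(text: str) -> list[str]:
    """Lowercase word tokens: a deliberately naive tokenizer for illustration."""
    return re.findall(r"\w+", text.lower())

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(question: str, docs: dict[str, str], min_score: float = 0.3):
    """Return (doc_id, score) for the best match, or None if nothing reaches min_score."""
    q = Counter(tokenize(question))
    scored = [(doc_id, cosine(q, Counter(tokenize(text)))) for doc_id, text in docs.items()]
    best = max(scored, key=lambda pair: pair[1], default=None)
    if best is None or best[1] < min_score:
        return None  # abstain: no reliable context, so no generated answer
    return best

def answer(question: str, docs: dict[str, str], min_score: float = 0.3) -> str:
    hit = retrieve(question, docs, min_score)
    if hit is None:
        return "No supporting document found: I cannot answer."
    doc_id, _ = hit
    # In a real RAG system, the LLM would now generate its answer from docs[doc_id].
    return f"[{doc_id}] {docs[doc_id]}"
```

Where to set the threshold is a trade-off: too high and the assistant refuses answerable questions, too low and weak matches slip through.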

AI error statistics

The numbers speak for themselves: according to the STARK benchmark (Wu, 2024), the Hit@1 rate (the probability that the first result returned is the right one) tops out at 18% in certain contexts. In other words, in those contexts the first result is wrong 82% of the time. And if your document base is dirty, fragmented, or incomplete, you land squarely in that red zone.
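Hit@1 is easy to measure on your own base. A minimal sketch, assuming you have a set of test questions, each paired with the ranked list of document IDs your retriever returned and a hand-labelled set of the IDs that actually answer it (the data layout is illustrative):

```python
def hit_at_k(ranked: list[str], relevant: set[str], k: int = 1) -> bool:
    """True if at least one of the top-k ranked document IDs is relevant."""
    return any(doc_id in relevant for doc_id in ranked[:k])

def mean_hit_at_k(runs: list[tuple[list[str], set[str]]], k: int = 1) -> float:
    """Share of test questions whose top-k results contain a relevant document."""
    if not runs:
        return 0.0
    return sum(hit_at_k(ranked, relevant, k) for ranked, relevant in runs) / len(runs)

# Five test questions: (ranked results returned, documents that actually answer it).
runs = [
    (["a", "b"], {"a"}),  # right at rank 1
    (["c", "d"], {"d"}),  # right only at rank 2
    (["e", "f"], {"g"}),  # never right
    (["h", "i"], {"i"}),  # right only at rank 2
    (["j", "k"], {"z"}),  # never right
]
print(mean_hit_at_k(runs, k=1))  # 0.2
print(mean_hit_at_k(runs, k=2))  # 0.6
```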

Take a concrete example. Your AI agent needs to check a non-competition clause. Your document base contains 500 unstructured PDF contracts. No metadata (contract type, signature status, BU concerned). No transversal classification plan. The AI can't find the clause, so it invents one. You sign a non-compliant contract and expose yourself to legal risk.

This is the crux of the matter: generative AI is only reliable if it is built on structured, governed data.

Why AI hallucinations are a problem in business

A clause invented by an LLM is a non-compliant contract. An incorrect number in a business plan is a flawed strategic decision. An incorrect supplier status is a failed audit. Hallucinations are not trivial. They compromise three pillars of the business.

1. Regulatory compliance

Your AI cites an outdated version of an ISO quality procedure. The auditor spots it. You lose your certification. Or worse: your AI agent states that a GDPR document is compliant when mandatory information is missing. You are in breach.

The problem: 85% of organizations do not check the quality of their document data on a regular basis (DSI Data & IA Barometer 2026): 29% have no control at all, and 56% only do occasional cleaning. The result: multiple versions, unclear statuses, unknown validity dates. The AI doesn't know which version is the right one. It guesses. And it guesses wrong.

2. Decision-making

A CEO asks for a summary of a strategic project. The AI aggregates documents from every department, but it mixes a draft business plan (not validated) with a management committee report (validated). The CEO then makes a decision based on erroneous data.

The reality on the ground: 53% of CIOs cite “poor quality of results” as the main risk of AI (DSI Data & IA Barometer 2026). This is not a theoretical concern: more than half of CIOs already rank it first.

3. Business trust and Shadow IT

47% of organizations score 0 or 1 out of 5 on readiness for AI-related Shadow IT (2026 DSI Data & IA Barometer). In other words, they do not control what their employees do with external AI tools (ChatGPT, Gemini, Claude). The result: frequent hallucinations, repeated mistakes, and business teams rejecting AI.

A lawyer who gets the wrong answer three times stops using the AI agent and goes back to email. You have lost adoption. Good luck relaunching an AI project after that.

18% of organizations don't know where their AI data is located (2026 DSI Data & IA Barometer). It's hard to control hallucinations when you don't even know where the data feeding the AI is stored.

The 3 main causes of AI hallucinations

There are three main causes of hallucinations. The good news: two of them are entirely under your control. The bad news: 75% of organizations have not yet dealt with them.

Cause 1: Unstructured or dirty data

The findings of the 2026 DSI Data & IA Barometer are indisputable:

- 75% of organizations have not secured their document foundations (still at an initial or emerging stage)

- 57% still file their documents manually

- 85% have no regular control over the quality of their document holdings (either no control at all or only occasional cleaning)

- 56% have not gone beyond basic search (manual search or a basic search engine)

Concretely, what does that mean? Duplicates (Dupont SA / DUPONT / Dupont SAS: three different entries for the same supplier). Missing metadata (unknown document type, no validity date, unclear status). Scanned documents that are not indexed (image-only PDFs with no OCR). Multiple versions with no indication of which one is valid.

As a result, the AI does not know which source is reliable. It mixes draft and signed versions, quotes obsolete documents, and invents content to fill the gaps.
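Duplicates like Dupont SA / DUPONT / Dupont SAS can be caught with a normalization key. A deliberately simple sketch: the list of legal-form suffixes is an assumption, and exact-key matching will both miss typos and risk merging distinct companies that share a name, so real master-data cleanup adds fuzzy matching and human validation.

```python
import re
from collections import defaultdict

# Hypothetical list of French legal-form suffixes to ignore when comparing names.
LEGAL_FORMS = {"sa", "sas", "sasu", "sarl", "eurl"}

def normalize_supplier(name: str) -> str:
    """Reduce a supplier name to a comparison key: lowercase, no punctuation, no legal form."""
    tokens = re.findall(r"\w+", name.lower())
    return " ".join(t for t in tokens if t not in LEGAL_FORMS)

def group_duplicates(names: list[str]) -> dict[str, list[str]]:
    """Group names sharing the same key; keep only keys with more than one spelling."""
    groups: dict[str, list[str]] = defaultdict(list)
    for name in names:
        groups[normalize_supplier(name)].append(name)
    return {key: variants for key, variants in groups.items() if len(variants) > 1}

print(group_duplicates(["Dupont SA", "DUPONT", "Dupont SAS", "Martin SARL"]))
# {'dupont': ['Dupont SA', 'DUPONT', 'Dupont SAS']}
```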

Cause 2: Lack of reliable context (poorly fed RAG)

You may have deployed a Retrieval-Augmented Generation (RAG) system. This is the mechanism that allows an AI to consult YOUR documents before responding, instead of relying solely on its training memory. It's like giving a manual to an expert rather than asking them to remember what they read two years ago.
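In practice, "giving the manual to the expert" means pasting the retrieved passages into the prompt, each tagged with its source. A minimal sketch, assuming a hypothetical `Fragment` type and prompt wording; the call to the LLM itself is left out. Such instructions reduce hallucinations but do not rule them out, which is why the quality of the fragments matters.

```python
from dataclasses import dataclass

@dataclass
class Fragment:
    doc_id: str  # identifier of the source document (hypothetical naming scheme)
    text: str    # retrieved passage

def build_grounded_prompt(question: str, fragments: list[Fragment]) -> str:
    """Assemble a prompt that restricts the model to the retrieved fragments and asks for citations."""
    context = "\n".join(f"[{f.doc_id}] {f.text}" for f in fragments)
    return (
        "Answer using ONLY the sources below. Cite the source ID for each statement.\n"
        "If the sources do not contain the answer, reply: 'Not found in the documents.'\n\n"
        f"Sources:\n{context}\n\nQuestion: {question}"
    )

prompt = build_grounded_prompt(
    "What is the non-competition period for supplier X?",
    [Fragment("contract-x/art-7", "The supplier shall not work for a direct competitor for 12 months.")],
)
print(prompt)
```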

But a RAG system only works if it is fed structured, governed data. The problem: only 13% of organizations use a chatbot or a RAG system (2026 DSI Data & IA Barometer). And how many of those have actually secured their document foundations? Very few.

Four concrete problems make a RAG system ineffective:

1. Inappropriate fragmentation: Your PDF documents are divided into blocks of 500 characters without semantic logic. The AI retrieves fragments out of context. It reconstructs an answer from incoherent pieces. Poor fragmentation can cause the relevance rate to fall below 20% (Wu, 2024).

2. Lack of usable metadata: The AI cannot filter by BU, by validity date, or by signed/draft status. It treats everything equally: the draft contract carries as much weight as the signed version. It hallucinates on the basis of obsolete or unvalidated documents.

3. SharePoint and distributed governance: SharePoint works well for collaboration, but not for a company-wide RAG. Each site can have its own rules and its own metadata. No transversal classification plan is enforced. No unified traceability. The result: the AI mixes versions, ignores access rights, and cites invalid documents.

4. Lack of AI governance: 24% of organizations have not implemented any element of AI governance (DSI Data & IA Barometer 2026). No policy, no traceability, no register of use cases. The AI accesses everything, without filters or control. You are exposed to data leaks and uncontrolled hallucinations.
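Problem 1 in the list above is easy to reproduce. The sketch below builds a synthetic contract (the text is invented for illustration) and applies a blind 500-character split: the non-competition obligation ends up in a chunk that no longer carries its article heading.

```python
def fixed_size_chunks(text: str, size: int = 500) -> list[str]:
    """Naive fragmentation: cut every `size` characters, ignoring clause boundaries."""
    return [text[i:i + size] for i in range(0, len(text), size)]

# Synthetic contract: article 7 starts at character 465, just before the 500-character cut.
clause_6 = "Article 6 - Payment. " + "Invoices are payable within 30 days. " * 12
clause_7 = ("Article 7 - Non-competition. The supplier shall not work "
            "for a direct competitor for 12 months.")
contract = clause_6 + clause_7
chunks = fixed_size_chunks(contract)

# No single chunk holds the whole clause: the heading and the obligation are separated.
print(any(clause_7 in c for c in chunks))                  # False
print("12 months" in chunks[1], "Article 7" in chunks[1])  # True False
```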

Cause 3: Intrinsic limitations of the model

Some models hallucinate more than others. That is a fact, but it is beyond the company's control: you can't rewrite GPT-4 or Claude. What you can do is structure your data so that any model, even an imperfect one, performs better.

Where to act: causes 1 and 2 are within your control. Structure your data, enrich your metadata, fragment your documents intelligently, and set up a minimal governance framework. You drastically reduce hallucinations, whatever model you use.

How to prevent hallucinations with an AI-ready EDM

The solution is not to change the AI model. It is to structure your data so that any model can use it without having to invent. An AI-ready EDM rests on 5 technical pillars which, together, drastically reduce hallucinations.

👉 Discover how Efalia turns your EDM into an AI-ready knowledge base: EDM connected to AI

Pillar 1: Contextual Fragmentation

How does that prevent hallucinations? Your documents are divided into coherent semantic blocks, not arbitrary 500-character chunks. A contract is split by clause, a quality procedure by step, a report by decision. The AI retrieves the right context, not a fragment cut off from its meaning.
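Splitting by clause can be sketched with a heading pattern. This assumes contracts whose articles start on their own line as "Article N ..." (an assumption about formatting; scanned PDFs would first need OCR and layout parsing, and a quality procedure would be split on its steps instead). Each fragment keeps its heading, so a retrieved block always says which clause it comes from.

```python
import re

# Assumption: each article starts on its own line as "Article <number> ...".
HEADING = re.compile(r"^Article\s+\d+\b.*$", re.MULTILINE)

def split_by_clause(text: str) -> list[dict[str, str]]:
    """Contextual fragmentation: one fragment per article, heading kept with its body."""
    starts = [m.start() for m in HEADING.finditer(text)]
    fragments = []
    preamble = text[: starts[0] if starts else len(text)].strip()
    if preamble:
        fragments.append({"heading": "Preamble", "text": preamble})
    for i, start in enumerate(starts):
        end = starts[i + 1] if i + 1 < len(starts) else len(text)
        block = text[start:end].strip()
        fragments.append({"heading": block.splitlines()[0], "text": block})
    return fragments

contract = (
    "Between Company A and supplier X.\n"
    "Article 6 - Payment\nInvoices are payable within 30 days.\n"
    "Article 7 - Non-competition\nThe supplier shall not work for a direct competitor for 12 months.\n"
)
for fragment in split_by_clause(contract):
    print(fragment["heading"])
# Preamble
# Article 6 - Payment
# Article 7 - Non-competition
```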

The impact: Poor fragmentation can cause relevance to fall below 20% (Wu, 2024). Contextual fragmentation ensures that each response is based on a complete unit of meaning. The result: 40% lower latency and accurate responses, with far fewer hallucinations.

Pillar 2: Strengthened documentary governance

How does that prevent hallucinations? The AI should never answer from a document that is obsolete, not validated, or intended for another department. Governance requires fine-grained rights management (user, group, role), complete traceability of consultations, a life-cycle policy (status, deadline alerts, compliant archiving), and GDPR and ISO 27001 compliance.

The impact: A lawyer who asks the AI about a confidential contract only sees the documents he has access to. The AI automatically filters by rights. No leaks. No answer based on an unvalidated draft. You stay compliant.
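Rights filtering and traceability can be sketched together: filter before anything reaches the model, and record each access. The group names, the `Document` and `User` types, and the in-memory `access_log` are hypothetical stand-ins; in production the rights would come from the company directory and the log would go to a proper audit store.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

@dataclass(frozen=True)
class Document:
    doc_id: str
    allowed_groups: frozenset[str]  # groups entitled to read this document

@dataclass(frozen=True)
class User:
    name: str
    groups: frozenset[str]  # groups the user belongs to

# Stand-in for an audit trail: (UTC timestamp, user name, document ID).
access_log: list[tuple[str, str, str]] = []

def authorized_documents(user: User, docs: list[Document]) -> list[Document]:
    """Keep only documents the user may read, and log each one handed to the AI."""
    visible = [d for d in docs if user.groups & d.allowed_groups]
    now = datetime.now(timezone.utc).isoformat()
    for d in visible:
        access_log.append((now, user.name, d.doc_id))
    return visible
```

Only the documents returned by `authorized_documents` would then be passed on to retrieval, so the AI never sees what the user could not open.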

Pillar 3: Metadata and transversal classification plan

How does that prevent hallucinations? Metadata is the grammar of AI. It makes it possible to filter results by objective criteria (document type, BU, validity date, signed/draft status), to prioritize sources (signed version > draft), and to limit hallucinations by providing context.
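Filtering and prioritizing are two different steps. A minimal sketch, assuming each retrieved candidate carries a BU, a status, an optional validity date, and a similarity score: out-of-scope and expired documents are excluded first, then a status weight makes a signed contract outrank a draft even when the draft is a slightly closer textual match. Field names, status vocabulary, and weights are illustrative assumptions, not values taken from the Earley or Jing studies.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

@dataclass
class Candidate:
    doc_id: str
    bu: str
    status: str                  # illustrative vocabulary: "signed", "validated", "draft"
    valid_until: Optional[date]  # None means no expiry date recorded
    similarity: float            # score returned by the retriever

# Hypothetical trust weights per status; unknown statuses get 0.
STATUS_WEIGHT = {"signed": 1.0, "validated": 0.9, "draft": 0.3}

def select_sources(cands: list[Candidate], bu: str, today: date, k: int = 3) -> list[str]:
    """Filter on objective metadata, then rank by similarity weighted by document status."""
    eligible = [
        c for c in cands
        if c.bu == bu and (c.valid_until is None or c.valid_until >= today)
    ]
    eligible.sort(key=lambda c: c.similarity * STATUS_WEIGHT.get(c.status, 0.0), reverse=True)
    return [c.doc_id for c in eligible[:k]]

cands = [
    Candidate("contract-draft", "legal", "draft", None, 0.92),
    Candidate("contract-signed", "legal", "signed", date(2027, 1, 1), 0.85),
    Candidate("contract-2019", "legal", "signed", date(2020, 1, 1), 0.95),  # expired
    Candidate("sales-memo", "sales", "validated", None, 0.99),             # other BU
]
print(select_sources(cands, "legal", date(2026, 1, 15)))
# ['contract-signed', 'contract-draft']
```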

The impact: Metadata enrichment improves response accuracy by 30 percentage points (from 53% to 83% relevance, Earley, 2023). Rich metadata + hybrid queries = +35% quality on complex corpora (Jing, 2024). The AI knows which version is valid. It no longer has to guess.

The SharePoint problem: SharePoint relies on distributed governance. Each team has its own metadata, rules, and structures. No transversal classification plan. No unified traceability. The AI hallucinates, mixes versions, and ignores access rights. A transversal EDM enforces a single classification plan, so the AI works from a coherent view of the business.

Pillar 4: Interoperability and business connectors

How does that prevent hallucinations? Your documents are filed automatically from your business tools (pay slips from the HRIS, invoices from the ERP, contracts from the CRM). They arrive with enriched metadata, synchronized rights, and a validated status. The AI has access to an up-to-date, governed, transversal base.
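What a connector adds is that metadata and rights come from the source system instead of being typed by hand. A minimal sketch mapping an ERP invoice to an EDM document; the record's field names, the status mapping, and the group names are hypothetical, since every ERP export looks different.

```python
from datetime import date

def invoice_to_document(record: dict) -> dict:
    """Map a (hypothetical) ERP invoice record to an EDM document with metadata and rights."""
    return {
        "doc_id": f"erp-invoice-{record['invoice_no']}",
        "metadata": {
            "doc_type": "invoice",
            "source": "ERP",
            "supplier": record["supplier"],
            "bu": record["cost_center"],
            "issued": date.fromisoformat(record["issued"]),
            # Status inherited from the ERP workflow rather than guessed later.
            "status": "validated" if record["status"] == "approved" else "draft",
        },
        # Rights derived from the business context: finance plus the owning BU.
        "allowed_groups": {"finance", record["cost_center"]},
    }

doc = invoice_to_document({
    "invoice_no": "F-2026-0042",
    "supplier": "Dupont SAS",
    "issued": "2026-03-31",
    "status": "approved",
    "cost_center": "bu-industry",
})
print(doc["metadata"]["status"])  # validated
```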

The impact: No more documents scattered between SharePoint, shared disks, and email. AI questions a single source of truth. The rights are synchronized with the company directory. Each document has its business context. You eliminate the hallucinations associated with outdated or unverified data.

Pillar 5: Vector agnostic base

How does that prevent hallucinations? Your documents are converted into mathematical representations (vectors) stored in a model-agnostic database. You can test GPT, Claude, Mistral, or Llama without redesigning anything. If a model hallucinates too much, you switch. You avoid technological lock-in.
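"Agnostic" can be sketched as dependency injection: the store accepts any text-to-vector function. One caveat the sketch makes explicit: vectors produced by different embedding models are not comparable, so switching the embedding model means re-embedding the corpus, whereas switching the LLM that writes the answer leaves the index untouched. Class and method names are hypothetical; a real deployment would use an actual vector database.

```python
import math
from typing import Callable

# Any function text -> vector can be plugged in (a hosted API, a local model...).
Embedder = Callable[[str], list[float]]

def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

class VectorStore:
    """In-memory store, agnostic to the embedding provider but aware of which one it uses."""

    def __init__(self, model_name: str, embed: Embedder):
        self.model_name, self.embed = model_name, embed
        self.texts: dict[str, str] = {}
        self.vectors: dict[str, list[float]] = {}

    def add(self, doc_id: str, text: str) -> None:
        self.texts[doc_id] = text
        self.vectors[doc_id] = self.embed(text)

    def search(self, query: str, k: int = 3) -> list[str]:
        q = self.embed(query)  # the query must be embedded with the SAME model as the documents
        return sorted(self.vectors, key=lambda d: cosine(q, self.vectors[d]), reverse=True)[:k]

    def switch_model(self, model_name: str, embed: Embedder) -> None:
        """Swap providers: vectors from different models are not comparable, so re-embed every text."""
        self.model_name, self.embed = model_name, embed
        self.vectors = {doc_id: embed(text) for doc_id, text in self.texts.items()}
```

Because the original texts are kept alongside the vectors, changing provider is a batch re-indexing job rather than a redesign.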

The impact: You don't depend on any AI provider. Your knowledge base remains usable in the long term. If GPT-5 is better than GPT-4, you integrate it without losing your data. You control the chain from start to finish.

The barometer's findings: 29% of CIOs cite “supplier dependency” as a risk. Only 5% use a sovereign platform. Talk of sovereignty has not yet translated into practice. A vector-agnostic base protects you.

Checklist: 5 foundations to limit hallucinations

☐ Contextual fragmentation (semantic blocks, not arbitrary splitting)

☐ Documentary governance (rights, traceability, life cycle, compliance)

☐ Metadata + transversal filing plan (AI grammar, +30% accuracy)

☐ Business connectors (automatic ingestion, on-the-fly enrichment, synchronized rights)

☐ Agnostic vector base (independence, sovereignty, avoid vendor lock-in)

Conclusion: clean data = reliable AI

AI is not magic. It does not invent out of malice. It predicts based on what you give it. If you inject dirty or unstructured data into the knowledge base, you get dirty, unstructured data in the index. And hallucinations.

The good news: you control two of the three main causes of hallucinations. Structure your data. Enrich your metadata. Fragment your documents intelligently. Set up a minimal governance framework (two elements are enough for governance to become an accelerator). You drastically reduce errors. Relevance can rise from 53% to 83% (Earley, 2023), and quality on complex corpora can improve by 35% (Jing, 2024).

The diagnosis of French CIOs is clear (2026 DSI Data & IA Barometer): 68% place “data governance and quality” as the No. 1 priority for 2026. They are not wrong. Data before AI. Without solid documentary foundations, artificial intelligence hallucinates.

The 2026 challenge: turn your electronic document management from a simple filing tool into the strategic foundation of your business AI. Companies that structure their data today gain a lasting competitive advantage. The others will keep hallucinating.